Deconstructing Generative AI: The Core Algorithms Explained Simply for Beginners

Welcome to the official launch of Mastering AI Tech, my primary platform for sharing information about AI and technology.

Deconstructing Generative AI: Understanding the Magic Behind Creation

There's a lot of chatter these days about Generative AI, and honestly, it can feel a bit like magic, can't it? We see incredible images, compelling text, and even music conjured seemingly out of thin air. But what's really going on under the hood? If you've ever wondered how Generative AI works, a simple explanation for beginners is exactly what I'm here to provide. I'll pull back the curtain on the core algorithms that make all this possible, demystifying the technology without getting lost in overly technical jargon.

Key Takeaways

- Generative AI creates new, original content: Unlike analytical AI that classifies or predicts, generative models are designed to produce novel outputs like images, text, or audio based on patterns learned from vast datasets.

- Core algorithms are like creative apprentices: Models such as Generative Adversarial Networks (GANs) and Transformers are foundational. GANs learn through a "game" between two neural networks, while Transformers excel at understanding context and relationships in sequential data, especially language.

- Understanding the basics empowers you: Grasping these fundamental concepts helps business owners, content creators, and the general public better utilize and critically assess the capabilities and limitations of this rapidly evolving technology.

What Exactly is Generative AI?

Before we dive into the nitty-gritty, let's nail down what we mean by "generative." Think about it this way: most of the AI we've encountered historically has been about analysis. It identifies objects in photos, flags spam emails, or recommends products based on your past behavior. These systems are brilliant at understanding existing data.

Generative AI, on the other hand, is a different beast entirely. It's about creation. It doesn't just recognize a cat; it can draw a new cat that's never existed before. It doesn't just understand human language; it can write a poem, draft an email, or even craft an entire story.

The key here is originality. These AI models aren't just copying and pasting. They're learning underlying patterns, structures, and styles from massive datasets, and then using that knowledge to generate entirely new examples that fit those learned characteristics. It's a bit like a highly skilled apprentice who, after studying thousands of masterpieces, can then produce their own unique works in a similar style.

How Does Generative AI Work? The Fundamental Building Blocks

At its heart, generative AI relies on sophisticated artificial neural networks. These networks, loosely inspired by the human brain, are incredibly good at recognizing complex patterns. For generative tasks, they go a step further: they learn not just to identify patterns, but to reproduce them in novel ways.

Imagine teaching a child to draw. You show them thousands of pictures of houses, trees, and people. Eventually, they don't just recognize a house; they can draw their own version, combining elements they've seen in new arrangements. Generative AI models operate on a similar principle, albeit with vastly more data and computational power.

The magic often lies in the training process. These models are fed enormous quantities of data – images, text, audio – and they learn to discern the statistical relationships and structures within that data. They then use this learned understanding to produce outputs that are statistically similar to the training data, yet novel. It’s a fascinating dance between imitation and innovation.
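To make "learn the statistics, then produce something new" concrete, here is a deliberately tiny sketch. It assumes the "dataset" is just a list of numbers and that a single bell curve (a normal distribution) is a good enough model of it; real generative models learn far richer distributions with neural networks. The function names and the height data are illustrative, not taken from any library or real dataset.

```python
import random
import statistics

def fit_gaussian(data):
    """'Training': estimate the mean and standard deviation of the data."""
    return statistics.mean(data), statistics.stdev(data)

def generate(mean, std, n, seed=0):
    """'Generation': draw n new values from the learned distribution."""
    rng = random.Random(seed)
    return [rng.gauss(mean, std) for _ in range(n)]

# Hypothetical training data, e.g. heights in centimetres.
heights = [160.0, 165.5, 170.2, 172.8, 168.1, 175.4, 162.3, 169.9]
mean, std = fit_gaussian(heights)
samples = generate(mean, std, n=5)
print(f"learned mean={mean:.1f}, std={std:.1f}")
print("new samples:", [round(s, 1) for s in samples])
```

The samples share the data's overall character (a similar average and spread) without copying any training value, which is the balance between imitation and innovation in miniature.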

The Role of Neural Networks in AI Creation

If you're new to this, a neural network might sound intimidating. Simply put, it's a series of interconnected nodes, or "neurons," organized in layers. Data enters the first layer, is processed through hidden layers, and then an output emerges from the final layer. Each connection has a "weight," and these weights are adjusted during training to improve the network's performance.
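The idea that training "adjusts the weights" can be shown with the smallest possible network: one linear neuron (no activation function) with a single weight and a bias, fitted by gradient descent. This is a minimal sketch; the hidden rule y = 2x + 1, the learning rate, and the number of steps are arbitrary choices for illustration.

```python
def train_neuron(xs, ys, lr=0.05, steps=2000):
    """Fit y ~ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0  # start with uninformed weights
    n = len(xs)
    for _ in range(steps):
        # Forward pass: the neuron's current predictions.
        preds = [w * x + b for x in xs]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # Update: nudge each parameter in the direction that reduces the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]  # the rule the neuron should discover
w, b = train_neuron(xs, ys)
print(f"learned w={w:.3f}, b={b:.3f}")
```

Nobody tells the neuron the rule; repeated small corrections move w toward 2 and b toward 1. Large networks do the same thing with millions or billions of weights at once.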

For generative tasks, these networks become incredibly adept at capturing the nuances of a dataset. They can learn the brushstrokes of a famous painter, the grammatical rules of a language, or the harmonic structures of a musical genre. This deep understanding is what allows them to create.

Without these powerful computational structures, the sheer complexity of generating coherent, high-quality content would be impossible. They are the engine driving the creative capabilities we see today.

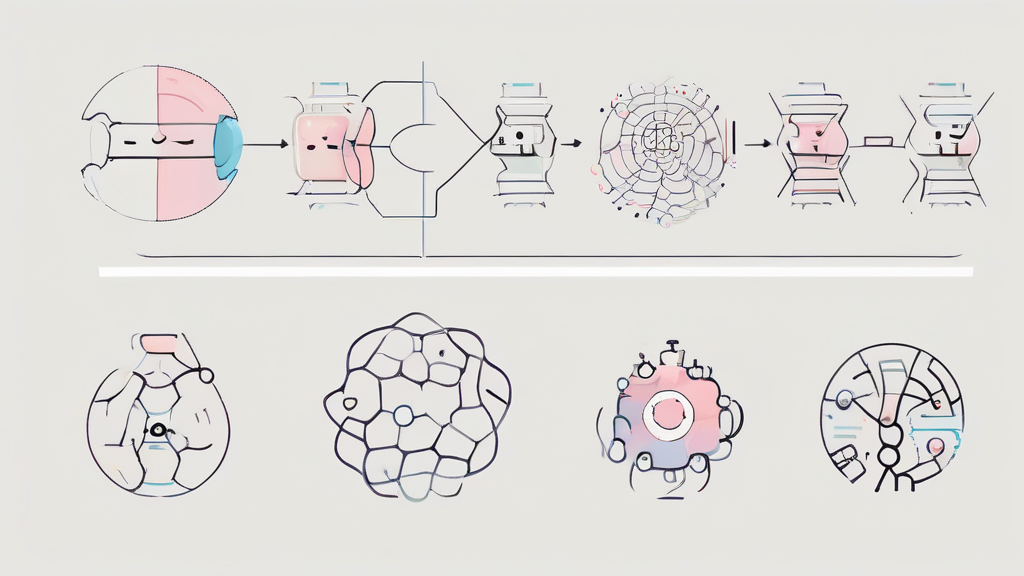

Generative Adversarial Networks (GANs): The Art of Deception

One of the most groundbreaking developments in generative AI came with the introduction of Generative Adversarial Networks, or GANs. Invented by Ian Goodfellow and his colleagues in 2014, GANs introduced a brilliant, almost playful, approach to machine learning. Think of it as a continuous game between two competing neural networks.

Imagine a counterfeiter trying to print fake money and a detective trying to spot the fakes. Both get better over time. The counterfeiter learns to make more convincing fakes, and the detective becomes more adept at identifying even subtle flaws. This is the core idea behind GANs.

This adversarial process is what drives the incredible quality of GAN-generated content, from hyper-realistic faces to new fashion designs. It's a testament to how competition can foster creativity, even in algorithms.

Inside the Generator and Discriminator

A GAN consists of two primary components:

- The Generator: This is the creative artist. Its job is to take random noise (just a bunch of meaningless numbers) and transform it into something that looks like the real data. If you're training a GAN to create images of cats, the generator will try to produce cat-like images from random input.

- The Discriminator: This is the shrewd critic, the detective. Its job is to look at an image and decide if it's a real image (from the training dataset) or a fake image (generated by the generator). It's essentially a binary classifier.

These two networks are constantly battling it out. The generator wants to fool the discriminator, and the discriminator wants to correctly identify the fakes. It's a zero-sum game where one network's gain is the other's loss, pushing both to improve iteratively.
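For readers curious about the formal version, the 2014 GAN paper expresses this contest as a single minimax objective. Here D(x) is the discriminator's estimated probability that x is real, G(z) is the generator's output for a random noise vector z, p_data is the distribution of the real training data, and p_z is the noise distribution:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator tries to push V up (scoring real data near 1 and fakes near 0), while the generator tries to push it down by making D(G(z)) close to 1.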

Training GANs: A Game of Cat and Mouse

The training process for GANs is fascinating. Here's a simplified breakdown:

- The generator creates a batch of "fake" data (e.g., fake images).

- The discriminator is shown a mix of these fake data points and "real" data points from the actual training dataset.

- The discriminator tries to correctly classify each item as real or fake.

- Based on the discriminator's performance, both networks update their internal parameters:

  - The discriminator adjusts its parameters to get better at telling real from fake, learning most from the examples it got wrong.

  - The generator uses the discriminator's feedback to adjust its parameters so that its next fakes are more likely to be judged real.

This cycle repeats thousands, even millions, of times. Ideally, the generator becomes so good at producing data that it is very hard to tell apart from the real thing, while the discriminator becomes a highly skilled authenticator. In the theoretical best case, the generator's output matches the real data so closely that the discriminator can only guess whether an image is real or fake, achieving roughly 50% accuracy. In practice, GAN training is notoriously unstable and rarely reaches this ideal exactly, but a discriminator hovering near chance while the samples look good suggests the generator has become very convincing.
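The "50% accuracy" endpoint can be checked against the standard losses. Below is a minimal sketch in plain Python: the discriminator is scored with binary cross-entropy on real and fake examples, and the generator uses the "non-saturating" loss that the original GAN paper suggests using in practice. The probabilities passed in are made-up numbers standing in for a real discriminator's outputs.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real examples should score 1, fakes should score 0.

    d_real and d_fake are the discriminator's probabilities that each example is real.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants its fakes scored as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Early in training: the discriminator easily spots the fakes.
early = discriminator_loss(d_real=[0.9, 0.95], d_fake=[0.1, 0.05])
# At the ideal equilibrium: every example gets 0.5, i.e. pure guessing.
balanced = discriminator_loss(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])

print(f"early D loss:    {early:.3f}")
print(f"balanced D loss: {balanced:.3f}  (2 * ln 2 = {2 * math.log(2):.3f})")
print(f"early G loss:    {generator_loss([0.1, 0.05]):.3f}")
```

A low discriminator loss means the generator still has work to do. In the ideal case, as the fakes improve, the discriminator's loss climbs toward 2 ln 2 (about 1.386), the value for pure guessing, while the generator's loss falls.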

For a deeper dive into the mathematical underpinnings, you might want to check out the Wikipedia article on Generative Adversarial Networks.

Transformers: The Language Architects

While GANs revolutionized image generation, another architecture, the Transformer, has completely reshaped the world of natural language processing (NLP) and is now making waves in other domains too. If you've interacted with AI chatbots that write coherent emails or summarize complex documents, you've likely encountered a Transformer in action.

Introduced by researchers at Google in the 2017 paper "Attention Is All You Need," Transformers moved away from older recurrent neural network (RNN) architectures that processed information one step at a time. Instead, they process all the positions in a sequence (like the words of a sentence or paragraph) in parallel, which makes training on huge datasets far more efficient.

Their ability to capture context and relationships across long stretches of text is what makes them so good at tasks like translation, summarization, and, of course, text generation. It's a bit like reading a whole passage at once and seeing how every part connects to every other part, rather than understanding one sentence at a time.

The Power of Attention Mechanisms

The secret sauce of Transformers is something called the "attention mechanism." Imagine you're reading a long, complex sentence. As you read each word, your brain doesn't just focus on that single word; it also considers how it relates to other words in the sentence. For instance, in "The quick brown fox jumps over the lazy dog," when you read "jumps," you implicitly connect it to "fox" as the subject doing the jumping.

The attention mechanism in Transformers works similarly. When processing a word, it assigns different levels of "attention" or importance to other words in the input sequence. This allows the model to weigh the relevance of different parts of the input, creating a rich contextual understanding. This is crucial for understanding nuances, ambiguities, and long-range dependencies in language.
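The core attention computation is surprisingly short. This sketch implements scaled dot-product attention, the form described in "Attention Is All You Need": each query is compared with every key, the scores are turned into weights with a softmax, and the output is a weighted average of the values. It uses plain Python lists and tiny hand-picked vectors (the names keys, values, and query_jumps are hypothetical); real Transformers learn these vectors, use much larger dimensions, and run many attention heads in parallel.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # How well does this query match each key?
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-D vectors for the words "fox", "jumps", "dog".
keys = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
values = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
query_jumps = [[2.0, 0.0]]  # a query that "looks for" fox-like words

outputs, weights = attention(query_jumps, keys, values)
print("attention weights:", [round(w, 3) for w in weights[0]])
```

Because the query points in the same direction as the "fox" key, "fox" receives the largest share of attention, and the output vector is pulled toward fox's value.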

It’s this ability to dynamically focus on relevant parts of the input that allows Transformers to handle complex linguistic structures with unprecedented accuracy and fluency. They don't just see words; they see how words interact.

Training Transformers for Creativity

Transformers are typically trained on colossal datasets of text, often billions of words or more scraped from the internet. During this training, they learn to predict missing words in a sentence (the approach used by models like BERT) or the next word in a sequence (the approach used by GPT-style models). For example, if you show it "The cat sat on the...", it learns that "mat" or "rug" are likely completions.

This process builds an incredibly sophisticated statistical model of language. When you then ask a Transformer to generate text, it uses this learned knowledge to compute probabilities for the next word (strictly, the next token, which may be a whole word or a piece of one), picks one (often the most probable, or a random choice among the likely candidates), and then repeats the process until it forms a coherent and contextually appropriate output. It's like having an incredibly well-read author who can mimic virtually any style or topic.
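The "predict a word, append it, repeat" loop can be illustrated without any neural network. The sketch below swaps in a much simpler technique, a bigram model that just counts which word follows which in a tiny made-up corpus, and generates text by always picking the most frequent next word (greedy decoding). A Transformer learns far richer probabilities from the whole context, not only the previous word, so treat this as an analogy for the generation loop, not for the model itself.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows, start, max_words=6):
    """Greedy decoding: repeatedly append the most frequent next word."""
    output = [start]
    for _ in range(max_words):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # no known continuation for this word
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)

corpus = "the cat sat on the mat . the cat sat on the rug . the cat ate the fish ."
model = train_bigrams(corpus)
print(generate(model, "the", max_words=3))
```

With max_words=6 the same model loops ("the cat sat on the cat sat"), a small-scale version of the repetition problem that is one reason real systems often sample from the likely candidates instead of always taking the top word.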

Models like OpenAI's GPT series (Generative Pre-trained Transformer) are prime examples of this architecture's generative capabilities. They've been trained on vast swathes of human knowledge, allowing them to produce text that can be hard to distinguish from human-written content. If you're curious about the general concept of attention in neural networks, you can find more details on Wikipedia's page on Attention (machine learning).

Beyond GANs and Transformers: Other Approaches

While GANs and Transformers are certainly the superstars of generative AI, they aren't the only players. The field is constantly evolving, with new architectures and techniques emerging regularly. It's a vibrant area of research and development.

Variational Autoencoders (VAEs)

Before GANs, Variational Autoencoders (VAEs) were a popular method for generative tasks. VAEs work by learning a compressed representation (a "latent space") of the input data. They try to encode an input into this latent space and then decode it back to reconstruct the original input as accurately as possible.

The "variational" part comes from adding a constraint that the latent space should follow a particular statistical distribution (typically a standard normal distribution). This allows us to sample from this learned distribution and generate new, similar data. VAEs tend to produce blurrier, less sharp outputs than GANs, but they are generally more stable to train, and their smooth, structured latent space makes it easier to steer generation or interpolate between outputs.
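Two small formulas do most of the "variational" work. This sketch assumes the encoder has already produced, for one input, a mean mu and a log-variance log_var for each latent dimension (the encoder and decoder networks are omitted, and the numbers are hypothetical). It shows the reparameterization trick used to sample a latent vector, and the KL-divergence penalty that pulls each encoded distribution toward a standard normal, following the original VAE formulation.

```python
import math
import random

def sample_latent(mu, log_var, rng):
    """Reparameterization trick: z = mu + sigma * eps, with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL divergence between N(mu, sigma^2) and N(0, 1), summed over dimensions."""
    return -0.5 * sum(1 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))

rng = random.Random(42)
mu, log_var = [0.5, -1.0], [0.0, -0.5]  # hypothetical encoder outputs
z = sample_latent(mu, log_var, rng)
print("latent sample:", [round(v, 3) for v in z])
print("KL penalty:", round(kl_to_standard_normal(mu, log_var), 3))
```

During training, this KL penalty is added to the reconstruction error, so the model balances "rebuild the input accurately" against "keep the latent space well organized". To generate new data afterwards, you would sample z from a standard normal distribution and pass it through the trained decoder (not shown).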

Diffusion Models

One of the most exciting recent developments has been diffusion models. During training, they take an image (or other data) and gradually add random noise to it until it becomes pure noise, and a neural network learns to reverse this process one small step at a time. During generation, the model starts from pure random noise and repeatedly removes a little of it until a coherent image emerges.

Think of it like starting with a static-filled TV screen and clearing away the static step by step, across the whole picture at once, until a beautiful image forms. Diffusion models have shown incredible results, often surpassing GANs in image quality and diversity, and are behind many of the stunning AI art generators we see today.
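The forward, noise-adding half of a diffusion model fits in a few lines. This sketch assumes a common DDPM-style setup (specific models vary): a linear schedule of small noise amounts beta over 1,000 steps, from 0.0001 to 0.02, plus a closed-form shortcut that jumps straight to any step t. The 4-value "image" is hypothetical, and the learned reverse step, where a neural network predicts and removes noise, is the part that requires training and is not shown.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise amounts, growing linearly from beta_start to beta_end."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for s <= t: how much signal survives."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, t, alpha_bars, rng):
    """Jump straight to step t: x_t = sqrt(a_t) * x0 + sqrt(1 - a_t) * noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]

rng = random.Random(0)
betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
x0 = [0.8, -0.3, 0.5, 0.1]  # a hypothetical 4-pixel "image"

for t in (0, 250, 999):
    noisy = add_noise(x0, t, alpha_bars, rng)
    print(t, f"signal kept={alpha_bars[t]:.4f}", [round(v, 2) for v in noisy])
```

Early steps barely change the data; by the last step almost none of the original signal survives, so the result is essentially pure noise. That endpoint is where generation begins.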

The Impact of Generative AI: What It Means for You

Generative AI isn't just a cool tech demo; it's rapidly becoming a practical tool across various industries. For online business owners, content creators, and everyday folks, understanding these capabilities is no longer optional. It's about staying ahead.

From automating mundane tasks to sparking entirely new creative avenues, the implications are vast. We're talking about tools that can draft marketing copy, design website layouts, generate product images, or even help you brainstorm ideas faster than ever before. This technology is changing the landscape of digital creation.

Practical Applications for Business Owners

For those running a business, Generative AI offers some compelling opportunities:

- Content Creation: Generate blog posts, social media updates, email newsletters, or product descriptions in minutes, freeing up valuable time.

- Marketing & Advertising: Create personalized ad copy, generate unique visuals for campaigns, or even design entire marketing materials.

- Product Design: Rapidly prototype new product designs, generate variations of existing products, or even create synthetic data for testing.

- Customer Service: Power more sophisticated chatbots that can provide detailed, context-aware responses to customer queries, improving satisfaction.

- Personalization: Tailor experiences for individual customers, from custom landing pages to personalized recommendations, at scale.

The potential for efficiency gains and innovation is enormous. It's about augmenting human creativity and productivity, not replacing it entirely. Many forward-thinking companies are already integrating these tools into their workflows, seeing real returns.

Ethical Considerations and the Future

Of course, with great power comes great responsibility. Generative AI also brings significant ethical considerations. Issues like deepfakes, copyright infringement, algorithmic bias, and the potential for job displacement are all serious concerns that we, as a society, need to address.

As these models become more sophisticated, the line between human-created and AI-generated content will blur further. This necessitates critical thinking and the development of robust ethical guidelines and regulations. We're still very early in this journey, and the future will require careful navigation.

However, the trajectory is clear: Generative AI will continue to evolve at a breathtaking pace. New architectures, more efficient training methods, and increasingly powerful models are on the horizon. My belief is that understanding the core principles, like those behind GANs and Transformers, provides a solid foundation for engaging with this future responsibly and effectively.

The Core of Generative AI: It's not magic, but a sophisticated interplay of algorithms learning patterns to create novelty. From the adversarial dance of GANs producing realistic images to the contextual mastery of Transformers crafting compelling text, these models are reshaping our digital world. Embracing this understanding is key to leveraging their immense potential responsibly.

Conclusion: Your Gateway to Generative Possibilities

We've peeled back the layers today, moving from the mystique surrounding Generative AI to a clearer understanding of its fundamental components. You now have a grasp of how Generative AI works, from the competitive learning of GANs to the context-aware brilliance of Transformers, and even a peek at other approaches like VAEs and diffusion models. It's not just about the technical jargon; it's about appreciating the ingenuity that allows machines to create.

This isn't just abstract knowledge; it's a practical foundation. Whether you're a business owner looking to streamline operations, a creative professional seeking new tools, or simply someone curious about the technological shifts around us, understanding these core algorithms empowers you. It helps you assess what's possible, what's hype, and how you can ethically and effectively integrate these powerful capabilities into your work and life.

The generative AI landscape is evolving rapidly, but the principles we've discussed today remain crucial. So, go forth, experiment, and explore the incredible possibilities that generative AI offers. The future of creation is here, and you're now better equipped to be a part of it.

Frequently Asked Questions (FAQ)

What's the main difference between Generative AI and traditional AI?

Traditional AI typically focuses on analysis, classification, and prediction based on existing data. Generative AI, conversely, is designed to create new, original content (like images, text, or audio) that didn't exist before, by learning patterns from vast datasets.

Are Generative AI models truly "creative"?

While Generative AI can produce novel and impressive outputs, its "creativity" stems from its ability to learn and combine patterns from its training data in new ways, rather than possessing consciousness or intentional artistic expression like humans. It's a sophisticated form of algorithmic synthesis.

Can Generative AI replace human jobs?

Generative AI is more likely to augment human capabilities rather than fully replace jobs. It excels at automating repetitive or initial creative tasks, freeing up humans to focus on higher-level strategy, critical thinking, ethical oversight, and unique creative direction that AI cannot replicate. It's a tool to enhance productivity and open new possibilities.

As artificial intelligence continues to redefine what's possible in the digital space, staying informed and adaptable is your greatest advantage. Mastering AI Tech is committed to evolving alongside these technological breakthroughs, so you always have access to clear resources, technical guidance, and industry insights. Bookmark this site, explore the upcoming foundational guides, and get ready to build your digital skills. The future of technology is already here, and together, we will master it. If you found this article helpful, please leave a comment. Thank you!
